Introduction

This is the book I which I had when I made my transition from generalist Software Engineer to ML engineer at Deepmind.

I started it as interview preparation, but it quickly evolved into a more comprehensive list of skills I acquired during my time at Google Deepmind. A lot of the skills required in ML performance are scattered around in different resources, or have to be learnt on the job by talking to more experienced engineers. This book tries to assemble the most important bits in a single place.

I use Gemini extensively to refine my words (I am not a native english speaker) and to generate diagrams (with Nano Banana 3.)

Goals and Non-Goals

The book is an introduction to the most important concepts required to succeed in ML engineering. Its goal is to cover a large breadth of subjects but not to dive too deep into any single one. Most of the topics discussed are active research problems with new papers being published frequently. Building a T-shaped skillset - good knoweldge in a lot of subjects and expert knowledge in a handful of others - is often recommended for a successful career. Readers are encouraged to go and read the latest papers in the subjects that interested them the most.

Prerequisites

A good understanding of computer programming in Python is required. Linear algebra, Machine Learning, and distributed programming skills will greatly help understanding the material but are not necessary.

Structure

The book gradually introduces concepts that build on top of each other as the chapters go by. We first introduce the basic APIs that are commonly used to build ML models. After that, we have a small chapter discussing the backward pass and its performance implications. Then, we discuss concurrency on modern hardware, how to leverage the different levels of concurrency, and how to think about and estimate performance. This leads us up to discussing multi-device distributed computations, what are the primitive operations and the common strategies for distributing ML models. We finish by introducing commonly used techniques that leverage everything we have discussed to serve LLMs efficiently at scale.

Playing Along

We try to add code examples whenever possible. Feel free to copy-paste to a Jupyter Notebook such as Google Colab or into your code editor to run the code and play with it. Some code examples in the Distributed section are conceptual pseudocode only and therefore will not work by themselves.

Contributing

Contributions to the GitHub Repository would be very much appreciated!

Array Programming Fundamentals

This chapter introduces array programming fundamentals using NumPy. While modern deep learning often happens in frameworks like PyTorch or JAX, NumPy remains the lingua franca of the Python data ecosystem. Crucially, the NumPy API provides the conceptual foundation for the tensor operations used in PyTorch and is adopted directly by JAX (jax.numpy). By mastering NumPy, you are learning not just a library, but the mental model required to manipulate high-dimensional data and understand the vectorized operations that drive ML performance.

What is an Array ?

At a high level, an array is an abstract representation of an n-dimensional arrangement of numbers. All the numbers share the same underlying data type (for instance, int32.) It exposes 4 necessary pieces of information:

- The Data Pointer: The memory address where the data begins.

- The Dtype: The type of every element (e.g., int32, float16). This tells the CPU how many bytes to read per element.

- The Shape: The logical dimensions of the array.

- The Stride: An extra piece of information that specifies the number of bytes to step in each dimension to reach the next element. This decouples the data layout from the logical shape, allowing for zero-copy operations like transposing.

The Physical Reality

Under the hood, the data is simply a 1D buffer of contiguous memory. The shape and dtype are used to calculate the total memory required for allocation.

For example, in C, the allocation looks like this:

// A (10, 10) matrix and a (100,) vector allocate the exact same memory.

size_t size = product(shape) * dtype.itemsize;

void* buffer = malloc(size);

To the memory allocator, the shape is irrelevant. It only cares about the total number of bytes. The shape is a logical construct used by the software to:

- Compute real memory addresses: It maps logical coordinates \((x, y)\) to a flat memory offset.

- Determine validity: It prevents accessing memory outside the allocated buffer (bounds checking).

- Define semantics: It dictates how operations broadcast across dimensions.

The CPU finds an element at logical index (i, j) using this fundamental formula:

address = data_pointer + (i * stride[0]) + (j * stride[1])

Creating Arrays

We can build an array from a python list:

import numpy as np

data = [[0, 1], [2, 3]]

arr = np.array(data, dtype=np.int32)

print(arr, arr.shape)

stdout

[[0 1]

[2 3]] (2, 2)

We can also build an array from a simple scalar

import numpy as np

scalar = np.array(10, dtype=np.int32)

print(scalar.shape)

stdout

()

Basic Operators

Most operators applicable to scalars have also been implemented on arrays thanks to operator overloading. The requirement is that all the shapes must match. We will explore cases where shapes do not match in the next chapter about Broadcasting.

Important: In NumPy, the * operator represents element-wise multiplication (the Hadamard product), not matrix multiplication. For matrix multiplication, use @ or np.matmul.

import numpy as np

shape = (4, 2, 3)

# An array full of 1 of shape (4, 2, 3)

ones = np.ones(shape)

# An array full of 2 of shape (4, 2, 3)

twos = np.full(shape, 2)

print(f'{ones + twos=}')

print(f'{ones - twos=}')

print(f'{ones * twos=}')

print(f'{ones / twos=}')

print(f'{ones // twos=}')

print(f'{ones == twos=}')

stdout

ones + twos=array([[[3., 3., 3.], ...

ones - twos=array([[[-1., -1., -1.], ...

ones * twos=array([[[2., 2., 2.], ...

ones / twos=array([[[0.5, 0.5, 0.5], ...

ones // twos=array([[[0., 0., 0.], ...

ones == twos=array([[[False, False, False], ...

In-Place Update vs New Allocations

The examples above created each new memory allocations. This is wasteful if one of the operands is not going to be needed afterwards. We can use in place reassignment operators like += to update the left hand side argument, thus not allocating new memory.

For instance

import numpy as np

shape = (4, 2, 3)

# An array full of 1 of shape (4, 2, 3)

ones = np.ones(shape)

# An array full of 2 of shape (4, 2, 3)

twos = np.full(shape, 2)

# Update ones value with ones + twos

ones += twos

print(f'{ones=}')

stdout

ones=array([[[3., 3., 3.], ...

Matmul

We can also run matrix multiplications between two n-dimensional tensors.

- For the operation to be valid, the last dimension of the first array needs to match the dimension of the penultimate dimension of the second array.

- The operator is

@, we can also usenp.matmul

import numpy as np

ones = np.random.normal(size=(4, 12, 64, 32))

twos = np.random.normal(size=(4, 12, 32, 16))

print(f'{(ones @ twos).shape=}')

stdout

(ones @ twos).shape=(4, 12, 64, 16)

Type Promotion (Upcasting)

When you apply an operator to two arrays of different data types, NumPy cannot simply guess which type to use. Instead, it follows a strict set of rules called Type Promotion (or upcasting) to find the smallest data type that can safely represent the result of the operation.

The general hierarchy is: bool -> int -> float.

How it works

NumPy looks for the "common denominator" that prevents data loss:

int32+int32->int32int32+float32->float64(Safe default behavior)float32+float16->float32

import numpy as np

shape = (4, 2, 3)

# 1s of type int32

ints = np.ones(shape, dtype=np.int32)

# 2s of type float32

floats = np.full(shape, 2, dtype=np.float32)

print(f'{(ints + ints).dtype=}')

print(f'{(ints + floats).dtype=}')

print(f'{(floats + floats).dtype=}')

stdout

(ints + ints).dtype=dtype('int32')

(ints + floats).dtype=dtype('float64')

(floats + floats).dtype=dtype('float32')

Broadcasting

We said in the previous chapter that arrays must have the same shape to apply element wise operators. This is not exactly true.

- If one of the axes is exactly

1, this axis will be replicated along the corresponding axis on the other array.- This means that we can add

[4, 2] + [1, 2]

- This means that we can add

- If one array has less dimension than the other,

NumPywill read both shapes right to left as long as the dimensions match, or if one of them is 1. Then it will virtually add1sized dimension to the smaller array.- This means that we can add

[32, 64, 64] + [64, 64] - We can add any scalar to any array

- We cannot implicitly add

[4, 2] + [4,], we need to first add a dimension ourselves[4, 2] + [4,][:, None]

- This means that we can add

Performance Note: The replication is virtual. NumPy sets the stride to 0 for broadcasted dimensions, meaning the data is not physically copied. A broadcasted axis is "free" in terms of memory.

We can add new axes of size one by slicing the array with an extra None or np.newaxis at the required position. We can also simply call arr.reshape(newshape).

import numpy as np

# An array full of 1 of shape (4, 2)

ones = np.ones((2, 4, 2))

# Shape (2, 2)

toadd = np.array([[0, 5], [10, 20]])

# Reshape from (2, 2) to (2, 1, 2)

toadd = toadd.reshape(2, 1, 2)

# Alternatively, we could write toadd = toadd[:, None, :]

print(f'{ones + toadd=}')

stdout

ones + toadd=array([[[ 1., 6.],

[ 1., 6.],

[ 1., 6.],

[ 1., 6.]],

[[11., 21.],

[11., 21.],

[11., 21.],

[11., 21.]]])

Broadcasting is used in many cases to scale an array or to apply a bias on a whole axis.

1D Masking

It is also widely used for masking. Let's look at a concrete example. We have a matrix with 1024 rows and 256 columns, we know that the 30 last rows are padding and contain garbage values.

NumPy comes with a very convenient function called np.arange(size) which creates an array of shape (size,) where each value is its index. We can use it to create a mask to keep the first first 994 elements by doing np.arange(arr.shape[0]) < non_padded.

import numpy as np

# Matrix: (1024 rows, 256 cols)

arr = np.random.normal(size=(1024, 256))

padding = 30

valid_rows = arr.shape[0] - padding

# Create a column vector mask: Shape (1024, 1)

# 1. np.arange creates (1024,)

# 2. Comparison creates boolean (1024,)

# 3. Slicing [:, None] adds the axis -> (1024, 1)

mask = (np.arange(arr.shape[0]) < valid_rows)[:, None]

# Broadcast: (1024, 256) * (1024, 1)

# The mask is virtually replicated across all 256 columns

masked_arr = arr * mask

# Sum down the rows (collapsing axis 0)

# Result is (256,) containing the sum of valid elements for each column

print(masked_arr.sum(axis=0).shape) # (256,)

2D Masking

It is also extremely common in LLMs to build a 2D mask for the attention mechanism. Tokens are only allowed to attend to themselves and to the tokens that came before them. Using broadcasting we can easily build this mask:

import numpy as np

seq_len = 4

# Create indices [0, 1, 2, 3]

indices = np.arange(seq_len)

# Logic: Is query position (i) >= key position (j)?

# (4, 1) >= (1, 4) -> Broadcasts to (4, 4)

is_causal = indices[:, None] >= indices[None, :]

# Create the additive mask

# 0.0 for valid, -inf for invalid (to be zeroed by softmax later)

mask = np.where(is_causal, 0.0, -np.inf)

print(mask)

stdout

[[ 0. -inf -inf -inf]

[ 0. 0. -inf -inf]

[ 0. 0. 0. -inf]

[ 0. 0. 0. 0.]]

Implementing a matrix multiplication with broadcasting

Some algorithms like Gated Linear Attention use a broadcasted multiplication followed by a reduction to implement a matrix multiplication in order to maintain better numerical stability even though the performance is worse and it cannot be done on accelerated tensor cores.

# A: (32, 64)

# B: (64, 16)

a = np.random.normal(size=(32, 64))

b = np.random.normal(size=(64, 16))

# 1. Expand A to (32, 64, 1)

# 2. Expand B to (1, 64, 16)

# 3. Broadcast Multiply -> Result is (32, 64, 16)

intermediate = a[:, :, None] * b[None, :, :]

# 4. Sum over the middle dimension (k=64)

out = intermediate.sum(axis=1)

print(f'{intermediate.shape=}')

print(f'{out.shape=}')

# Verify against standard MatMul

np.testing.assert_almost_equal(out, a @ b)

stdout

intermediate.shape=(32, 64, 16)

out.shape=(32, 16)

Slicing

Slicing allows taking a view of subset of an array. Most slicing operations will not allocate extra memory, they will create a new view into the original buffer with a different starting address, a new shape, and a different stride.

Syntax

The API to slice an array revolves around the overloaded indexing operator ([]).

Single Axis

For an array with a single axis, it behaves exactly like a normal python list. We can

- Get a single scalar by specifying its index

arr[2] - Use negative indexing to index from right to left

arr[-1] - Use a slice object to get multiple indices (from start to end with the last index excluded.)

arr[3:7]orslice(3, 7). - Add a step to the slice object to how many indices to skip between two elements.

arr[3:7:2]will get elements at indices3and5.arr[7:3:-1]is the reversed version ofarr[3:7].

Performance Note: When you use a step (e.g., ::2), NumPy simply doubles the stride in the metadata. The memory is untouched.

import numpy as np

arr = np.arange(10)

print(f'{arr[2]=}')

print(f'{arr[-1]=}')

print(f'{arr[3:7]=}')

print(f'{arr[slice(3, 7)]=}')

print(f'{arr[3:7:2]=}')

print(f'{arr[7:3:-1]=}')

stdout

arr[2]=np.int64(2)

arr[-1]=np.int64(9)

arr[3:7]=array([3, 4, 5, 6])

arr[slice(3, 7)]=array([3, 4, 5, 6])

arr[3:7:2]=array([3, 5])

arr[7:3:-1]=array([7, 6, 5, 4])

Out of Bound

- Out of bound access to a scalar is illegal,

np.arange(10)[100]raises anIndexError. - But out of bound slicing is fine

np.arange(10)[100:1]will just return an empty array (shape = (0,)).

Multiple Axes

Arrays with multiple axes can be sliced using the same mechanism:

import numpy as np

arr = np.arange(10).reshape(5, 2)

print(f'{arr=}')

print(f'{arr[2]=}')

print(f'{arr[1:5]=}')

print(f'{arr[1:5:2]=}')

stdout

arr=array([[0, 1],

[2, 3],

[4, 5],

[6, 7],

[8, 9]])

arr[2]=array([4, 5])

arr[1:5]=array([[2, 3],

[4, 5],

[6, 7],

[8, 9]])

arr[1:5:2]=array([[2, 3],

[6, 7]])

We can also slice multiple axes at once by separating them with a coma ,:

import numpy as np

arr = np.arange(12).reshape(4, 3)

print(f'{arr=}')

print(f'{arr[2, 1]=}')

print(f'{arr[2, 1:3]=}')

print(f'{arr[2:4, 1:3]=}')

stdout

arr=array([[ 0, 1, 2],

[ 3, 4, 5],

[ 6, 7, 8],

[ 9, 10, 11]])

arr[2, 1]=np.int64(7)

arr[2, 1:3]=array([7, 8])

arr[2:4, 1:3]=array([[ 7, 8],

[10, 11]])

We can slice a full axis by inserting : in its position. If we provide less indices than we have axes, NumPy will automatically append : to the missing axes as we have seen earlier. If we just want to slice the last indices and take a full view of the first ones, we can use the ... syntax (ellipsis.)

import numpy as np

arr = np.arange(12).reshape(2, 3, 2)

print(f'{arr=}')

print(f'{arr[:, -1, :]=}')

print(f'{arr[:, -1]=}')

print(f'{arr[..., -1]=}')

print(f'{arr[..., -1, -1]=}')

stdout

arr=array([[[ 0, 1],

[ 2, 3],

[ 4, 5]],

[[ 6, 7],

[ 8, 9],

[10, 11]]])

arr[:, -1, :]=array([[ 4, 5],

[10, 11]])

arr[:, -1]=array([[ 4, 5],

[10, 11]])

arr[..., -1]=array([[ 1, 3, 5],

[ 7, 9, 11]])

arr[..., -1, -1]=array([ 5, 11])

Mutation

Since a slice is just a window into the same memory, modifying the slice modifies the original array.

import numpy as np

original = np.zeros(5)

slice_view = original[0:2]

# Modify the slice

slice_view[:] = 100

print(f'{original=}') # Original is changed!

stdout

original=array([100., 100., 0., 0., 0.])

If you need to modify a slice without affecting the original, you must explicitly call .copy().

Indexing

We explored slicing in the previous chapter. Building on this, we now look into indexing.

Indexing uses the same syntax as slicing, but instead of using a slice object or an int, we use another array for indexing.

While Slicing returns a View (instant, no memory cost), Indexing triggers a Copy.

Integer Array Indexing

We can use an array of integers for indexing. NumPy will return the elements at the requested indices on the requested axis.

import numpy as np

arr = np.arange(8).reshape(2, 4)

print(f'{arr=}')

print(f'{arr[:, np.array([0, 3])]=}')

stdout

arr=array([[0, 1, 2, 3],

[4, 5, 6, 7]])

arr[:, np.array([0, 3])]=array([[0, 3],

[4, 7]])

In ML frameworks like PyTorch or JAX, this specific operation (indexing a high-dimensional tensor with a list of indices) is often called gather or take. It is expensive because the hardware must "jump around" in memory to collect the rows.

Boolean Array Indexing (masking)

If you index using an array of Booleans, NumPy selects elements where the index is True.

This is widely used in ML for Filtering (e.g., for implementing ReLU).

Note: The result of boolean indexing is always a 1-D array, because the True values might not form a rectangular shape.

import numpy as np

# Model predictions (logits)

logits = np.array([-1.5, 2.0, -0.1, 5.2])

# Create a boolean mask for positive values (Simulating ReLU)

mask = logits > 0

# Select only positive values

positive_activations = logits[mask]

print(f'{mask=}')

print(f'{positive_activations=}')

stdout

mask=array([False, True, False, True])

positive_activations=array([2. , 5.2])

In-Place Mutation

While extracting data (b = a[indices]) creates a copy, assigning data (a[indices] = 0) works in-place. This is highly efficient.

import numpy as np

# Feature map

features = np.array([10, 20, 30, 40, 50])

# Indices to "drop out"

drop_indices = [0, 3]

# Modify IN PLACE (No copy created)

features[drop_indices] = 0

print(f'{features=}')

stdout

features=array([ 0, 20, 30, 0, 50])

Indexing vs Slicing Summary

| Operation | Syntax | Type | Memory Cost | Speed |

|---|---|---|---|---|

| Slicing | arr[0:5] | View | ✅ Nearly Zero | ⚡ Instant |

| Indexing | arr[[0, 1, 2]] | Copy | ❌ Linear O(N) | 🐢 Slower (Memory Bound) |

Reshaping And Transposing

It is very common to want to change how interpret our data. For instance, we might want to flatten a (28, 28) image into a single (784,) vector.

Both reshape and transpose are designed to be metadata-only operations. They change the metadata (shape and stride) without touching the underlying buffer.

Reshaping

- Reshaping changes the logical dimensions of the array while keeping the total number of elements constant.

- It only changes the logical shape, the values at physical indices remain constant.

- For instance, if we reshape from

(10,)to(5, 2), the value at indexarr[2]before reshape will be the same as the value at indexarr[1, 0]ater the reshape.

- For instance, if we reshape from

- The product of the new shape must equal the product of the old shape.

prod(new_shape) == prod(old_shape).

import numpy as np

original = np.arange(12).reshape(2, 3, 2)

reshaped = original.reshape(3, 4)

print(f"{original.shape=}, {original.strides=}")

print(f"{reshaped.shape=}, {reshaped.strides=}")

print(f"{original=}")

print(f"{reshaped=}")

stdout

original.shape=(2, 3, 2), original.strides=(48, 16, 8)

reshaped.shape=(3, 4), reshaped.strides=(32, 8)

original=array([[[ 0, 1],

[ 2, 3],

[ 4, 5]],

[[ 6, 7],

[ 8, 9],

[10, 11]]])

reshaped=array([[ 0, 1, 2, 3],

[ 4, 5, 6, 7],

[ 8, 9, 10, 11]])

Conveniently, we do not have to write out all the dimensions when we reshape. Passing -1 will infer the size of the remaining dimension.

# We have a buffer of 7840 elements

data = np.arange(7840)

# We want 28x28 images, but we don't want to manually calc the batch size.

# NumPy calculates: 7840 / (28 * 28) = 10

formatted = data.reshape(-1, 28, 28)

print(f'{formatted.shape=}') # (10, 28, 28)

stdout

formatted.shape=(10, 28, 28)

Transposing

Transposing swaps axes. It means that after a transposition, elements in the array have logically moved.

Let's imagine an array of shape (10, 32, 64).

- Let's transpose the last two axes (we can use

swapaxes(1, 2)). The array becomes(10, 64, 32). The value at index[0, 1, 2]will now be at index[0, 2, 1]. - As mentioned earlier, no data is actually moved, we just change the stride of the array.

- There are many APIs for transposing.

- Arrays with one or two dimensions can use

.transpose()or.T. - Any array can use

.transpose(*indices)(equivalent to permute inPyTorch) where indices maps the new axes to the old axes. For instance(10, 32, 64).transpose(2, 0, 1)becomes(64, 10, 32). - Any array can use

.swapaxes(axis1, axis2)to swap the two axes provided.

- Arrays with one or two dimensions can use

import numpy as np

original = np.arange(10).reshape(2, 5)

# Transpose

transposed = original.T

print(f"{original.shape=}, {original.strides=}")

print(f"{transposed.shape=}, {transposed.strides=}")

print(f"{original=}")

print(f"{transposed=}")

stdout

original.shape=(2, 5), original.strides=(40, 8)

transposed.shape=(5, 2), transposed.strides=(8, 40)

original=array([[0, 1, 2, 3, 4],

[5, 6, 7, 8, 9]])

transposed=array([[0, 5],

[1, 6],

[2, 7],

[3, 8],

[4, 9]])

The Performance Trap: Contiguity

NumPy arrays are laid out in Row-Major order (C-style) by default. This means iterating over the last dimension is stepping 1 item at a time in memory (contiguous).

When you Transpose, you break this contiguity. The stride of the last dimension is no longer 1.

- Reshaping a Contiguous Array: Free (View).

- Reshaping a Non-Contiguous Array: Expensive (Force Copy).

If you attempt to reshape an array that has been transposed, NumPy is often forced to physically copy the data into a new, contiguous buffer to satisfy the reshape request.

| Operation | Action | Cost |

|---|---|---|

| reshape | Updates shape/strides | ✅ Free (usually) |

| transpose | Swaps shape/strides | ✅ Free (always) |

| reshape after transpose | Reorganizes Memory | ❌ Expensive (Copy) |

Einsums

Einsums are the lifeblood of tensor arithmetic in ML. They provide a clear syntax to express high dimensional tensor operations. Furthermore, they are often more efficient than using a mix of traditional operators because linear algebra libraries are able to reorder the operations to minimize the materialized size.

Syntax

We write an einsum using np.einsum(subscripts, *operands) function.

subscriptsis a python string defining the operation to apply to the operands.- The string is formatted as such

dims_1,dims_2,...->dims_out - We give a name to each dimension of each operand for instance a batch of images could be

bwh(batch, width, height.) - We separate operands with

,. For instancebwh,whd(wheredis the model dimension.) - We specify the output dimensions after

->. For instancebwh,whd->bd.

- The string is formatted as such

*operandsare an arbitrary amount of arrays to which the operation will be applied. For instancenp.einsum('bwh,whd->bd', images, weights)

Understanding Einsums

- Repeating Letters: If an index appears in two inputs (e.g.,

jinij,jk), it implies multiplication along that dimension. - Omitted Letters (Reduction): If an index appears in the input but not the output, it is summed over (reduced).

- Output Order: You can rearrange the output dimensions arbitrarily (e.g.,

ij->jiis a transpose).

| Operation | Standard API | Einsum Notation |

|---|---|---|

| Transpose | A.T | ij -> ji |

| Sum | A.sum() | ij -> |

| Column Sum | A.sum(axis=0) | ij -> j |

| Dot Product | a @ b | i, i -> |

| Matrix Mul | A @ B | ik, kj -> ij |

| Batch MatMul | A @ B | bik, bkj -> bij |

| Outer Product | np.outer(a, b) | i, j -> ij |

Broadcasting with Ellipsis (...)

In Deep Learning, we often write code that shouldn't care about the number of batch dimensions (e.g., handling both (batch, sequence, feature) and (batch, sequence, num_heads, feature)).

einsum supports ... to represent "all other dimensions".

# Apply a linear layer (Weights: i, j) to a tensor

# of ANY shape ending in 'i'

# ...i, ij -> ...j

output = np.einsum('...i,ij->...j', input_tensor, weights)

Code Examples

import numpy as np

batch = 10

width = 28

height = 64

d_model = 512

images = np.random.normal(size=(batch, width, height))

weights = np.random.normal(size=(width, height, d_model))

print(f"{np.einsum('bwh,whd->bd', images, weights).shape=}")

stdout

np.einsum('bwh,whd->bd', images, weights).shape=(10, 512)

This reduces both the width and the height. But we could also just reduce the width for instance, batch the height and write the output in a different order. For instance bwh,whd->dbh.

stdout

np.einsum('bwh,whd->dbh', images, weights).shape=(512, 10, 64)

Path Optimizations

When multiplying three or more matrices, the order of operations matters significantly for memory.

(A @ B) @ C vs A @ (B @ C)

If A is (1000, 2), B is (2, 1000), and C is (1000, 1000):

A @ Bcreates a(1000, 1000)intermediate matrix (1M elements.)B @ Ccreates a(2, 1000)intermediate matrix (2k elements.)

The second path is orders of magnitude more memory efficient. np.einsum (with optimize=True) automatically finds this path.

import numpy as np

# A chain of 3 matrix multiplications

# Dimensions chosen to make one path disastrously memory heavy

a = np.random.normal(size=(1000, 2))

b = np.random.normal(size=(2, 1000))

c = np.random.normal(size=(1000, 1000))

# Naive chaining (Left-to-Right)

# Creates (1000, 1000) intermediate!

res_naive = (a @ b) @ c

# Einsum Optimization

# Automatically detects that contracting (b, c) first is cheaper

res_einsum = np.einsum('ij,jk,kl->il', a, b, c, optimize=True)

np.testing.assert_allclose(res_naive, res_einsum)

Code Visualization

einsum can be difficult to debug. It helps to visualize it as a nested loop.

Single reduced dimension

Let's visualize bwh,whd->db. We are reducing w and h, and transposing the result to d, b.

import numpy as np

batch = 10

width = 28

height = 64

d_model = 512

images = np.random.normal(size=(batch, width, height))

weights = np.random.normal(size=(width, height, d_model))

manual_out = np.zeros((d_model, batch, height))

# One loop per non reduced dimension

for b in range(batch):

for h in range(height):

for d in range(d_model):

manual_out[d, b, h] = images[b, :, h] @ weights[:, h, d]

einsum_out = np.einsum('bwh,whd->dbh', images, weights)

np.testing.assert_almost_equal(manual_out, einsum_out)

We loop over all our batch dimensions, we extract vectors of size w that we dot product and write at the correct (transposed) output dimension.

Multiple Reduced Dimension

The bwh,whd->db einsum is more interesting because it reduces both w and h. Concretely, the only difference with the above einsum is that we will revisit the same d, b output tile multiple times, so we need to reduce intermediate dot products into their corresponding output indices.

import numpy as np

manual_out = np.zeros((d_model, batch))

# One loop per non reduced dimension

for b in range(batch):

for h in range(height):

for d in range(d_model):

# The 'w' dimension is reduced via the dot product (@)

# We accumulate (+=) because 'h' is also being reduced

manual_out[d, b] += images[b, :, h] @ weights[:, h, d]

einsum_out = np.einsum('bwh,whd->db', images, weights)

np.testing.assert_almost_equal(manual_out, einsum_out)

Exercises

Let's practice now some einsum functions!

Outer Product

The outer product takes two vectors and produces a matrix, by multiplying every element of the first vector by every element of the second vector.

import numpy as np

size = 10

a = np.ones(size)

b = np.ones(size)

res = np.einsum('your_einsum', a, b) # <-- einsum here

desired = np.outer(a, b)

np.testing.assert_array_equal(res, desired)

print(f"{res.shape=}")

Solution

res = np.einsum('i,j->ij', a, b)

Dot Product

The dot product is the sum of the products of elements at corresponding indices between two vectors of the same size.

\[\mathbf{a} \cdot \mathbf{b} = \sum_{i=1}^{n} a_i b_i = a_1 b_1 + a_2 b_2 + \cdots + a_n b_n\]

import numpy as np

size = 10

a = np.ones(size)

b = np.ones(size)

res = np.einsum('your_einsum', a, b) # <-- einsum here

desired = np.dot(a, b)

np.testing.assert_array_equal(res, desired)

print(f"{res.shape=}")

Solution

res = np.einsum('i,i->', a, b)

Inner Product

The inner product is the same operation as dot-product when performed on two vectors.

When applied to matrices, we take every row from the first matrix and calculate the dot product against every row of the second matrix. This results in a matrix where each entry (i, j) tells us how aligned the i-th row of the first matrix is with the j-th row of the second.

import numpy as np

size = 10

a = np.ones((size, 2*size))

b = np.ones((size, 2*size))

res = np.einsum('your_einsum', a, b) # <-- einsum here

desired = np.inner(a, b)

np.testing.assert_array_equal(res, desired)

print(f"{res.shape=}")

Solution

res = np.einsum('ik,jk->ij', a, b)

Path Optimizations

Let's implement an einsum between three 2-dimensional tensors.

import numpy as np

batch = 100

dim_in = 10

dim_hidden = 1000

dim_out = 20

x = np.ones((batch, dim_in))

w_in = np.ones((dim_in, dim_hidden))

w_out = np.ones((dim_hidden, dim_out))

res = np.einsum('your_einsum', x, w_in, w_out) # <-- einsum here

desired = x @ w_in @ w_out

np.testing.assert_array_equal(res, desired)

print(f"{res.shape=}")

Solution

Make sure you use the optimal way to multiply the matrices with optimize=True.

res = np.einsum('bi,ih,ho->bo', x, w_in, w_out, optimize=True)

Tensor dot product

Let's implement an einsum between two 3-dimensional tensors.

We want to contract along the common dim_model dimension.

import numpy as np

batch = 100

sequence = 10

dim_model = 1000

n_heads = 2

head_dim = dim_model // n_heads

x = np.ones((batch, sequence, dim_model))

w = np.ones((dim_model, n_heads, head_dim))

res = np.einsum('your_einsum', x, w) # <-- einsum here

desired = (x[:, None, :, :] @ w.transpose(1, 0, 2)).transpose(0, 2, 1, 3)

np.testing.assert_array_equal(res, desired)

print(f"{res.shape=}")

Solution

res = np.einsum('bsd,dnh->bsnh', x, w)

Practice: Implementing An LLM's Forward Pass

We have now covered NumPy's most important APIs. Let's use them to implement the hottest model architecture: the Transformer.

Specifically, we are implementing a decoder-only transformer similar to LLAMA. We use RoPE for our positional encoding.

A major difference with LLAMA is that we use post-norm instead of pre-norm. We normalize after the attention mechanism and residual instead of before each block. We do this for convenience but you will almost never see this in real life.

Embedding Lookup

The input to our model is a one dimensional array of integers. These integers correspond to token ids, we use these token ids to retrieve the corresponding embeddings for each token in our input.

Let's define our sequence length (the number of ids) as 256. Our vocabulary size is 32768, this is the total count of possible token ids, anything above this value will be incorrect. Our model dimension is 512.

You can edit the next snippets or copy-paste the code into a Jupyter Notebook like Google Colab.

We provide a random initialization of the input token ids and the vocab. Implement the lookup method to map the token ids to their corresponding embeddings. You click on the solution button below to reveal the solution.

import numpy as np

sequence_length = 256

vocab_size = 32768

model_dim = 512

# -- Initiate Random Values --

# (sequence_length,)

input_token_ids = np.random.randint(0, vocab_size, size=(sequence_length,))

# (vocab_size, model_dim)

vocab = np.random.normal(size=(vocab_size, model_dim)).astype(np.float16)

def embeddings_lookup(input_ids, vocab) -> np.ndarray:

# -- Your Code --

...

Solution

def embeddings_lookup(input_ids, vocab) -> np.ndarray:

return vocab[input_ids]

# (sequence_length, model_dim)

embeddings = embeddings_lookup(input_token_ids, vocab)

Attention Mechanism

Now that we have our embeddings, we pass them through the multi-head attention mechanism. It is the crux of the transformer architecture.

Q,K,V projections

The first thing we need to do is multiply our token embeddings with the trained \(Q\), \(K\), \(V\) weights. Since we are using the multi-head attention architecture, the weights are split into num_heads heads of shape head_dim. They all share the same shape (model_dim, num_heads, head_dim) so that they can be multiplied to our input embeddings.

In the next snippet, we initialize the weights, and you implement the \(Q\), \(K\), \(V\) projections.

num_heads = 4

head_dim = 64

attn_shape = (model_dim, num_heads, head_dim)

# -- Initiate Random Values --

q_weights = np.random.normal(size=attn_shape).astype(np.float16)

k_weights = np.random.normal(size=attn_shape).astype(np.float16)

v_weights = np.random.normal(size=attn_shape).astype(np.float16)

def qkv_proj(

embeddings, q_weights, k_weights, v_weights

) -> tuple[np.ndarray, np.ndarray, np.ndarray]:

# -- Your Code --

...

Solution

def qkv_proj(

embeddings, q_weights, k_weights, v_weights

) -> tuple[np.ndarray, np.ndarray, np.ndarray]:

q = np.einsum('sd,dnh->snh', embeddings, q_weights)

k = np.einsum('sd,dnh->snh', embeddings, k_weights)

v = np.einsum('sd,dnh->snh', embeddings, v_weights)

return q, k, v

qkv_proj(embeddings, q_weights, k_weights, v_weights)

RoPE

At this point, our model has no way of knowing in which order the tokens appeared in the sequence. Furthermore, the attention mechanism does not inherently expose this information. Therefore, we need to tweak our embeddings according to their position in the sequence.

RoPE is a very common approach. It introduces an efficient trick to apply a rotation matrix with varying angles depending on the index in the sequence and the index in the vector.

We use the split variant for convenience. A common alternative is the interleaved variant.

\(RoPE\) encodes position information by rotating pairs of query and key vectors in a 2D plane. For a vector \(x\) at position \(m\), the rotated vector is computed as: \[\text{RoPE}(x, m) = x \cdot \cos(m\theta) + \text{rotate_half}(x) \cdot \sin(m\theta)\]

Where \(\text{rotate_half}\) swaps the components of pairs and negates the first one: \[\text{rotate_half} \begin{pmatrix} x_1 \\ x_2 \end{pmatrix} = \begin{pmatrix} -x_2 \\ x_1 \end{pmatrix}\]

\[\text{RoPE}(x, m) = x \cdot \cos(m\theta) + \text{rotate_half}(x) \cdot \sin(m\theta)\]

Let's implement \(RoPE\), we provide the code to generate \(\theta\).

def apply_rotary_emb(x):

dim = x.shape[-1]

# 1. Generate Theta

theta = 1.0 / (10_000 ** (np.arange(0, dim, 2) / dim))

# 2. Generate the positions m (indices of the tokens)

# 3. Multiply all indices with all theta (outer product of m and theta)

# 4. Apply cos and sin (separately) to outer product

# 5. Split x's last axis in 2 (we call the first half x1, the second x2)

# 6. We will now combine the output

# The first half (out1) is x1 * cos - x2 * sin

# The second half is x1 * sin + x2 * cos

# Think about broadcasting cos and sin first

# 7. Return the concatenation of out1 and out2 (np.concatenate)

# 8. Apply to q and k

q = apply_rotary_emb(q)

k = apply_rotary_emb(k)

Solution

def apply_rotary_emb(x):

dim = x.shape[-1]

# 1. Generate Theta

theta = 1.0 / (10_000 ** (np.arange(0, dim, 2) / dim))

# 2. Generate the positions m (indices of the tokens)

m = np.arange(x.shape[0])

# 3. Multiply all indices with all theta (outer product of m and theta)

freqs = m[:, None] * theta[None, :]

# 4. Apply cos and sin (separately) the the outer product

cos = np.cos(freqs)

sin = np.sin(freqs)

# 5. Split x's last axis in 2 (we call the first half x1, the second x2)

x1 = x[..., :dim // 2]

x2 = x[..., dim // 2:]

# 6. We will now combine the output

# The first half (out1) is x1 * cos - x2 * sin

# The second half is x1 * sin + x2 * cos

# Think about broadcasting cos and sin first

cos = cos[:, None, :]

sin = sin[:, None, :]

out1 = x1 * cos - x2 * sin

out2 = x1 * sin + x2 * cos

# 7. Return the concatenation of out1 and out2 (np.concatenate)

return np.concatenate([out1, out2], axis=-1)

# 8. Apply to q and k

q = apply_rotary_emb(q)

k = apply_rotary_emb(k)

Attention Scores

We have encoded all the necessary information into our \(q\) and \(k\) tensors. We can now multiply them together and apply softmax to the output.

\[\text{AttentionScores}(Q, K) = \text{softmax}\left(\frac{Q K^T}{\sqrt{d_k}}\right)\]

This is the most crucial part of the attention mechanism. To understand, let's take a look at the dimensions. \(q\) and \(k\) are both of shape (sequence, num_heads, head_dim). We contract the head dimension (head_dim) which means that our output will be of shape (sequence, num_heads, sequence). So we get sequence twice in our output. This means that we get a score for each \(q\) to each \(k\). When we normalize with softmax, the sum of the scores adds up to 1.

The last missing piece of the Attention Scores is the masking. A token cannot have access to a token that appeared after it in the sequence otherwise it would have access to the future. To remedy this, we simply mask the scores before the softmax. The mask looks like this:

\[M = \begin{bmatrix} 0 & -\infty & -\infty\\ 0 & 0 & -\infty \\ 0 & 0 & 0 \end{bmatrix}\]

The equation becomes

\[\text{AttentionScores}(Q, K) = \text{softmax}\left( \frac{Q K^T}{\sqrt{d_k}} + M \right)\]

Why \(-\infty\)? When we apply the exponential function during softmax (\(e^{-\infty}\)), the result becomes 0.

def softmax(x):

# Subtract max to prevent overflow

e_x = np.exp(x - np.max(x, axis=-1, keepdims=True))

return e_x / np.sum(e_x, axis=-1, keepdims=True)

def attention_scores(q, k):

# 1. Multiply q and k

# Output shape: (Seq_q, Batch, Seq_k)

# 2. Divide by the square root of head_dim

# 3. Generate the mask

# 4. Apply the mask

# 5. Return softmax

Solution

def softmax(x):

# Subtract max to prevent overflow

e_x = np.exp(x - np.max(x, axis=-1, keepdims=True))

return e_x / np.sum(e_x, axis=-1, keepdims=True)

def attention_scores(q, k):

# 1. Multiply q and k

qk = np.einsum('snh,tnh->snt', q, k)

# 2. Divide by the square root of head_dim

qk /= np.sqrt(q.shape[-1])

# 3. Generate the mask

seq = q.shape[0]

bool_mask = np.arange(seq)[:, None] >= np.arange(seq)[None, :]

mask = np.where(bool_mask, 0, -np.inf)

# 4. Apply the mask

qk += mask[:, None, :]

# 5. Return softmax

return softmax(qk)

qk @ v

We have scores for each key, query pair. We multiply the scores with the values. Since the scores add up to 1, we are essentially doing a weighted average of the values depending on the score for each key.

# Multiply qk and v

Solution

qkv = np.einsum('snt,tnh->snh', qk, v)

Final Attention Projection

Our activations now have shape (sequence, num_heads, head_dim). We want to go back to model_dim before applying the MLP. So we project back with learnt weights.

upproj_weights = np.random.normal(size=(num_heads, head_dim, model_dim)).astype(np.float16)

# Project qkv with upproj_weights

Solution

upproj_weights = np.random.normal(size=(num_heads, head_dim, model_dim)).astype(np.float16)

attention_out = np.einsum('snh,nhd->sd', qkv, upproj_weights)

Residual and Normalization

Finally, for better gradient flow and to constrain the latent space, we apply a residual connection. We simply add the output of the attention mechanism to the original input. Furthermore, we normalize the output to prevent the gradients from exploding.

In real LLMs, we usually normalize before attention, and then again before MLP. We did it this way for convenience.

\[x_{out} = \text{RMS_Norm}(x + \text{Attention}(x))\]

The Formula: \[x_{norm} = \frac{x}{\text{RMS}(x)} \cdot \gamma\]

Where: \[\text{RMS}(x) = \sqrt{\frac{1}{d} \sum_{i=1}^{d} x_i^2}\]

# 1. Residual (add the output of attention back to the original input)

def rms_norm(x, gamma=1.0):

# 2. Implement rms square root of the sum of the squares of x

# on the last dimension

# 3. Apply RMS

Solution

# 1. Residual (add the output of attention back to the original input)

x = embeddings

x += attention_out

def rms_norm(x, gamma=1.0):

# 2. Implement rms square root of the sum of the squares of x

# on the last dimension

d_model = x.shape[-1]

rms = np.sqrt(np.sum(x ** 2, axis=-1, keepdims=True) / d_model)

return gamma * x / rms

# 3. Apply RMS

x = rms_norm(x)

Multi Layer Perceptron (MLP)

After attention, we run a (usually) 2 layers MLP separated by an activation function in between. Here, we run a classic \[x + \text{ReLU}(x W_0) W_1\]

We also run another normalization after. Again, this would typically be before in a real world use case.

Where:

\[ReLu(x) = \max(0, x)\]

We introduce a new dimension, the hidden dimension (hidden_dim) that we set to 4 * model_dim

hidden_dim = 4 * model_dim

w0 = np.random.normal(size=(model_dim, hidden_dim)).astype(np.float16)

w1 = np.random.normal(size=(hidden_dim, model_dim)).astype(np.float16)

def mlp(x, w0, w1):

# Your code.

x = mlp(x, w0, w1)

x = rms_norm(x)

Solution

hidden_dim = 4 * model_dim

w0 = np.random.normal(size=(model_dim, hidden_dim)).astype(np.float16)

w1 = np.random.normal(size=(hidden_dim, model_dim)).astype(np.float16)

def mlp(x, w0, w1):

y = np.einsum('bd,df->bf', x, w0)

y = np.maximum(y, 0)

return np.einsum('bf,fd->bd', y, w1) + x

x = mlp(x, w0, w1)

x = rms_norm(x)

Putting it all together

Click to expand

import numpy as np

sequence_length = 256

vocab_size = 32768

model_dim = 512

# - EMBEDDINGS -

# (sequence_length,)

input_token_ids = np.random.randint(0, vocab_size, size=(sequence_length,))

# (vocab_size, model_dim)

vocab = np.random.normal(size=(vocab_size, model_dim)).astype(np.float16)

def embeddings_lookup(input_ids, vocab) -> np.ndarray:

return vocab[input_ids]

# (sequence_length, model_dim)

embeddings = embeddings_lookup(input_token_ids, vocab)

# - ATTENTION -

# -- Attention Projections --

num_heads = 4

head_dim = 64

attn_shape = (model_dim, num_heads, head_dim)

q_weights = np.random.normal(size=attn_shape).astype(np.float16)

k_weights = np.random.normal(size=attn_shape).astype(np.float16)

v_weights = np.random.normal(size=attn_shape).astype(np.float16)

def qkv_proj(

embeddings, q_weights, k_weights, v_weights

) -> tuple[np.ndarray, np.ndarray, np.ndarray]:

q = np.einsum('sd,dnh->snh', embeddings, q_weights)

k = np.einsum('sd,dnh->snh', embeddings, k_weights)

v = np.einsum('sd,dnh->snh', embeddings, v_weights)

return q, k, v

q, k, v = qkv_proj(embeddings, q_weights, k_weights, v_weights)

# -- RoPE --

def apply_rotary_emb(x):

dim = x.shape[-1]

# 1. Generate Theta

theta = 1.0 / (10_000 ** (np.arange(0, dim, 2) / dim))

# 2. Generate the positions m (indices of the tokens)

m = np.arange(x.shape[0])

# 3. Multiply all indices with all theta (outer product of m and theta)

freqs = m[:, None] * theta[None, :]

# 4. Apply cos and sin (separately) the the outer product

cos = np.cos(freqs)

sin = np.sin(freqs)

# 5. Split x's last axis in 2 (we call the first half x1, the second x2)

x1 = x[..., :dim // 2]

x2 = x[..., dim // 2:]

# 6. We will now combine the output

# The first half (out1) is x1 * cos - x2 * sin

# The second half is x1 * sin + x2 * cos

# Think about broadcasting cos and sin first

cos = cos[:, None, :]

sin = sin[:, None, :]

out1 = x1 * cos - x2 * sin

out2 = x1 * sin + x2 * cos

# 7. Return the concatenation of out1 and out2 (np.concatenate)

return np.concatenate([out1, out2], axis=-1)

# 8. Apply to q and k

q = apply_rotary_emb(q)

k = apply_rotary_emb(k)

# -- Attention Scores --

def softmax(x):

# Subtract max to prevent overflow

e_x = np.exp(x - np.max(x, axis=-1, keepdims=True))

return e_x / np.sum(e_x, axis=-1, keepdims=True)

def attention_scores(q, k):

# 1. Multiply q and k

qk = np.einsum('snh,tnh->snt', q, k)

# 2. Divide by the square root of head_dim

qk /= np.sqrt(q.shape[-1])

# 3. Generate the mask

seq = q.shape[0]

bool_mask = np.arange(seq)[:, None] >= np.arange(seq)[None, :]

mask = np.where(bool_mask, 0, -np.inf)

# 4. Apply the mask

qk += mask[:, None, :]

# 5. Return softmax

return softmax(qk)

qk = attention_scores(q, k)

# -- qk @ v --

qkv = np.einsum('snt,tnh->snh', qk, v)

# -- Final Attention Proj --

upproj_weights = np.random.normal(size=(num_heads, head_dim, model_dim)).astype(np.float16)

attention_out = np.einsum('snh,nhd->sd', qkv, upproj_weights)

# -- Residual and RMS norm --

x = embeddings

x += attention_out

def rms_norm(x, gamma=1.0):

# 2. Implement rms square root of the sum of the squares of x

# on the last dimension

d_model = x.shape[-1]

rms = np.sqrt(np.sum(x ** 2, axis=-1, keepdims=True) / d_model)

return gamma * x / rms

# 3. Apply RMS

x = rms_norm(x)

# - MLP -

hidden_dim = 4 * model_dim

w0 = np.random.normal(size=(model_dim, hidden_dim)).astype(np.float16)

w1 = np.random.normal(size=(hidden_dim, model_dim)).astype(np.float16)

def mlp(x, w0, w1):

y = np.einsum('bd,df->bf', x, w0)

y = np.maximum(y, 0)

return np.einsum('bf,fd->bd', y, w1) + x

x = mlp(x, w0, w1)

x = rms_norm(x)

ML Compilers

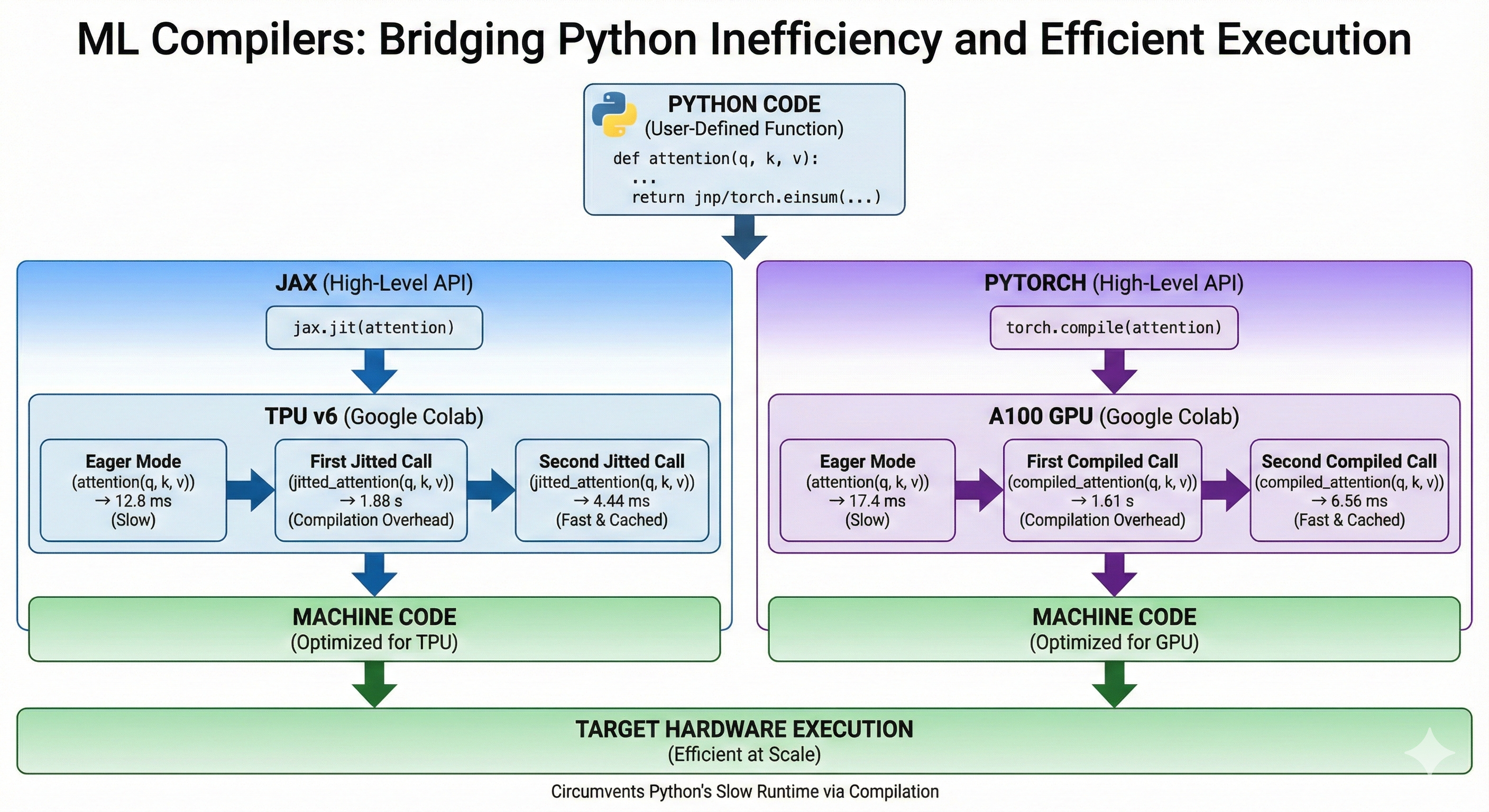

Python is a particularly inefficient programming language. Yet, it is used almost ubiquitously to develop massive models and deploy them efficiently at scale. How come?

ML frameworks circumvent Python's slow runtime by compiling the model's code into machine code for the target architecture just like the Rust compiler would. This allows us to write efficient code despite python.

High Level APIs

In this chapter, we will cover both Jax and PyTorch. They both provide an API to compile a Python function and make it more efficient. For now, we will only showcase the APIs. It the later subchapters, we will dive into the differences between Jax and PyTorch compilation processes and the different optimizations that the ML compilers perform.

Let's implement the attention mechanism in both Jax and PyTorch to demonstrate how the API works at a high level.

Jax

Jax offers the jax.jit method that takes a python function and a set of abstract inputs and compiles an optimized method for the function, inputs pair. Abstract inputs are composed of a dtype and a shape. The first call to the jitted function is slow because it needs to perform the compilation, the subsequent calls are very fast because the compilation is cached. Calling the same method with inputs of different dtype or shape will trigger a recompilation.

We are running this on a TPU v6 in Google Colab. We use block_until_ready otherwise, Jax would not wait for the computations to be complete on TPU before yielding back control to the CPU.

from jax import numpy as jnp

import jax

def attention(q, k, v):

qk = jnp.einsum('btnh,bsnh->btns', q, k)

scores = jax.nn.softmax(qk, axis=-1)

return jnp.einsum('btns,bsnh->btnh', scores, v)

jitted_attention = jax.jit(attention)

Weights initialization

key_q, key_k, key_v = jax.random.split(jax.random.PRNGKey(0), 3)

shape = (32, 1024, 16, 256)

# Automatically on TPU in Jax

q = jax.random.normal(key_q, shape, dtype=jnp.bfloat16)

k = jax.random.normal(key_k, shape, dtype=jnp.bfloat16)

v = jax.random.normal(key_v, shape, dtype=jnp.bfloat16)

Runtime in Eager Mode

%%time

out = attention(q, k, v).block_until_ready()

stdout

Wall time: 12.8 ms

First jitted call

%%time

out = jitted_attention(q, k, v).block_until_ready()

stdout

Wall time: 1.88 s

Second jitted call

stdout

Wall time: 4.44 ms

PyTorch

PyTorch uses the torch.compile method. At a high level, it is very similar to jax.jit. Notice how similar the code is.

We are running this on an A100 in Google Colab.

import torch

def attention(q, k, v):

qk = torch.einsum('btnh,bsnh->btns', q, k)

scores = torch.nn.functional.softmax(qk, dim=-1)

return torch.einsum('btns,bsnh->btnh', scores, v)

compiled_attention = torch.compile(attention)

Weights Initialization

# Explicitly set default device to GPU

device = torch.device("cuda")

shape = (32, 1024, 16, 256)

generator = torch.Generator(device=device).manual_seed(0)

q = torch.randn(shape, generator=generator, device=device, dtype=torch.bfloat16)

k = torch.randn(shape, generator=generator, device=device, dtype=torch.bfloat16)

v = torch.randn(shape, generator=generator, device=device, dtype=torch.bfloat16)

Runtime Eager Mode

%%time

out = attention(q, k, v)

# Equivalent of block_until_ready

torch.cuda.synchronize()

stdout

Wall time: 17.4 ms

First Compiled Call

%%time

out = compiled_attention(q, k, v)

# Equivalent of block_until_ready

torch.cuda.synchronize()

stdout

Wall time: 1.61 s

Second Compiled Call

stdout

Wall time: 6.56 ms

Why not just use another language?

There are efforts to create new languages for ML. For instance Julia which seems to have lost its momentum and Chris Lattner's Mojo which is too recent to tell.

The reasons Python is so commonly used in the ML community are mostly historical and cultural. The language has been around for more than 30 years, so it has a lot of mature and stable libraries that are commonly taught in universities. It is also easy to pick up and play with, making it ideal for quick iterations in research environments. At this point, Python's adoption is not about its inherent qualities but mostly about network effects, which are extremely difficult to compete against.

Jax vs PyTorch

While Jax's and PyTorch's APIs look similar, they handle compilation very differently. This matters a lot when writing code in either library and when thinking about performance.

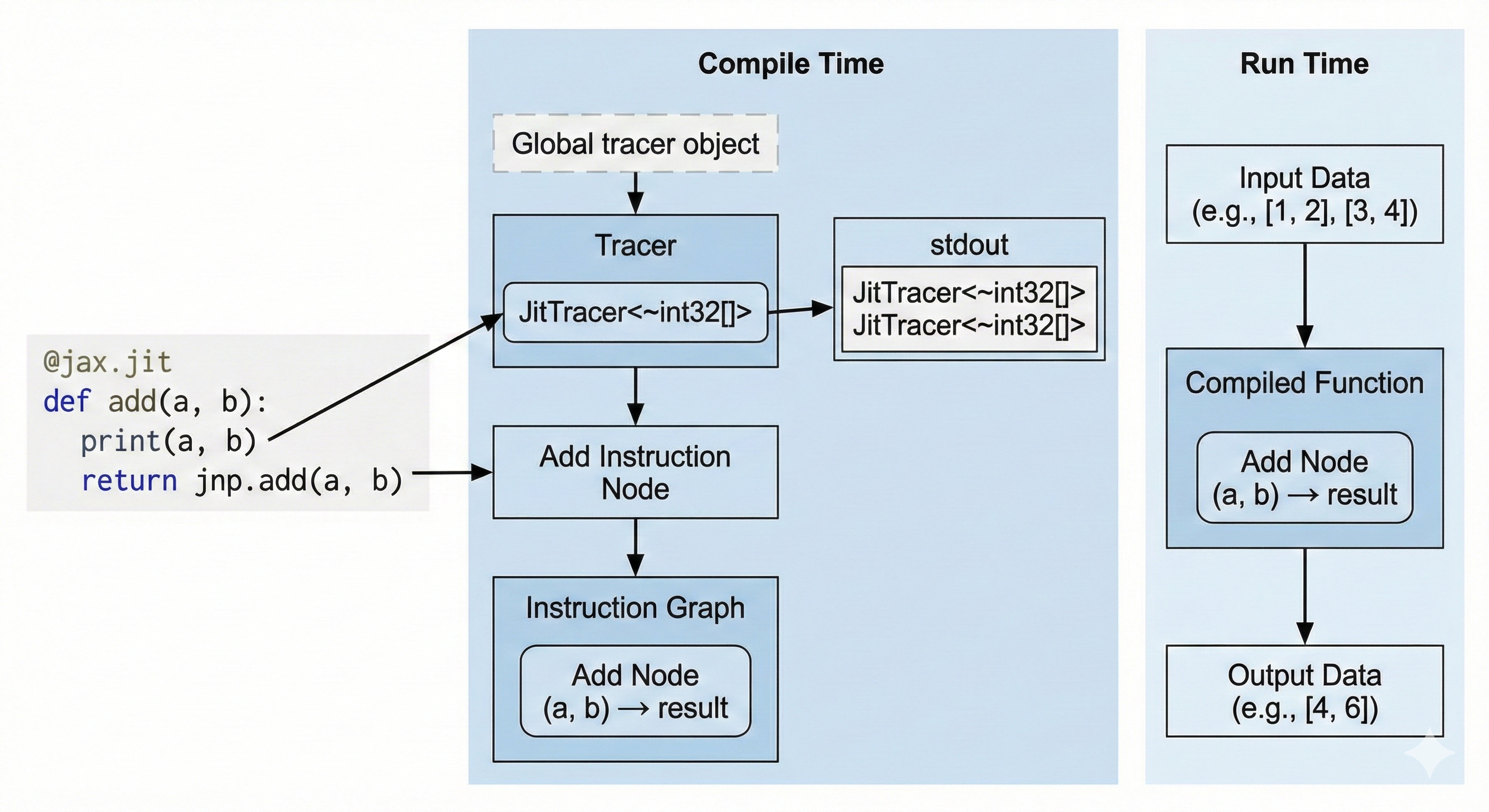

Tracing (Jax)

Jax uses the tracing approach. When we we compile a Python method using jax.jit, we set a global variable called the Tracer. When our Python code encounters a Jax method, it appends an instruction to the global Tracer. At the end of our function, the Tracer has a full graph of instructions that it finally compiles.

This means that:

- The only code that will be compiled will be the

Jaxmethods we encountered during compilation. If..elsestatements andfor loopsare only evaluated at compile time and their evaluation will be constant at run time.- Runtime dependent control flow has to be implemeted using

JaxAPIs likejax.lax.condandjax.lax.fori_loop.

Another particularity is that jax.jit will compile your method for a specific set of input shapes and dtypes. Changing your input shape will force a recompilation of the program.

Furthermore, jitted methods are purely functional. We cannot mutate a value in-place. Performance Note: Although the API is functional (create new arrays), the compiler optimizes this into in-place updates under the hood, so you don't lose performance.

Let's illustrate what this means:

Printing (Jax)

import jax

@jax.jit

def add(a, b):

print(a, b)

return a + b

add(1, 2)

add(3, 4)

stdout

JitTracer<~int32[]> JitTracer<~int32[]>

The print statement is not a Jax method, so it only prints at compile time. We only have one stdout line even though we called the method twice because it only printed during compilation and the second call is cached. If we wanted to print actual runtime values, we would use jax.debug.print.

Runtime If Statement (Jax)

import jax

@jax.jit

def conditional(a, b):

if a > b:

return a

return jnp.exp(b)

conditional(3, 4)

stderr

TracerBoolConversionError:

Attempted boolean conversion of traced array with shape bool[].

We attempted to use a runtime value in an if statement, resulting in a compile-time error. We can fix this using jax.lax.cond to ensure that the Tracer knows about the if statement and compiles it.

import jax

import jax.numpy as jnp

@jax.jit

def conditional(a, b):

return jax.lax.cond(a > b, lambda: a, lambda: jnp.exp(b))

# (Using floats because a and exp(b) must have the same type)

conditional(1., 2.)

Static Arguments

We can define static arguments to be passed to the method. These arguments will not be traced, however they can be used for control flow during compilation.

Let's look at this code:

from functools import partial

import jax

import jax.numpy as jnp

@partial(jax.jit, static_argnames=('add_residuals',))

def linear_layer(x, w0, add_residuals: bool = False):

y = x @ w0

# add_residuals is static so it can be used in the `if` statement

if add_residuals:

return x + y

return y

x = jnp.ones((32, 128))

w0 = jnp.ones((128, 128))

When we compile the method with add_residuals = False, the Tracer never sees the x + y operation, so it never gets compiled and the Tracer never knows this line of code existed. If you call the function again with add_residuals = True, Jax MUST recompile the whole function.

We can even pass functions or complex objects as static arguments!

from typing import Callable

@partial(jax.jit, static_argnames=('activation',))

def linear_layer(x, w0, activation: Callable[[jax.Array], jax.Array] | None = None):

y = x @ w0

if activation:

return activation(y)

return y

x = jnp.ones((32, 128))

w0 = jnp.ones((128, 128))

linear_layer(x, w0, jax.nn.relu)

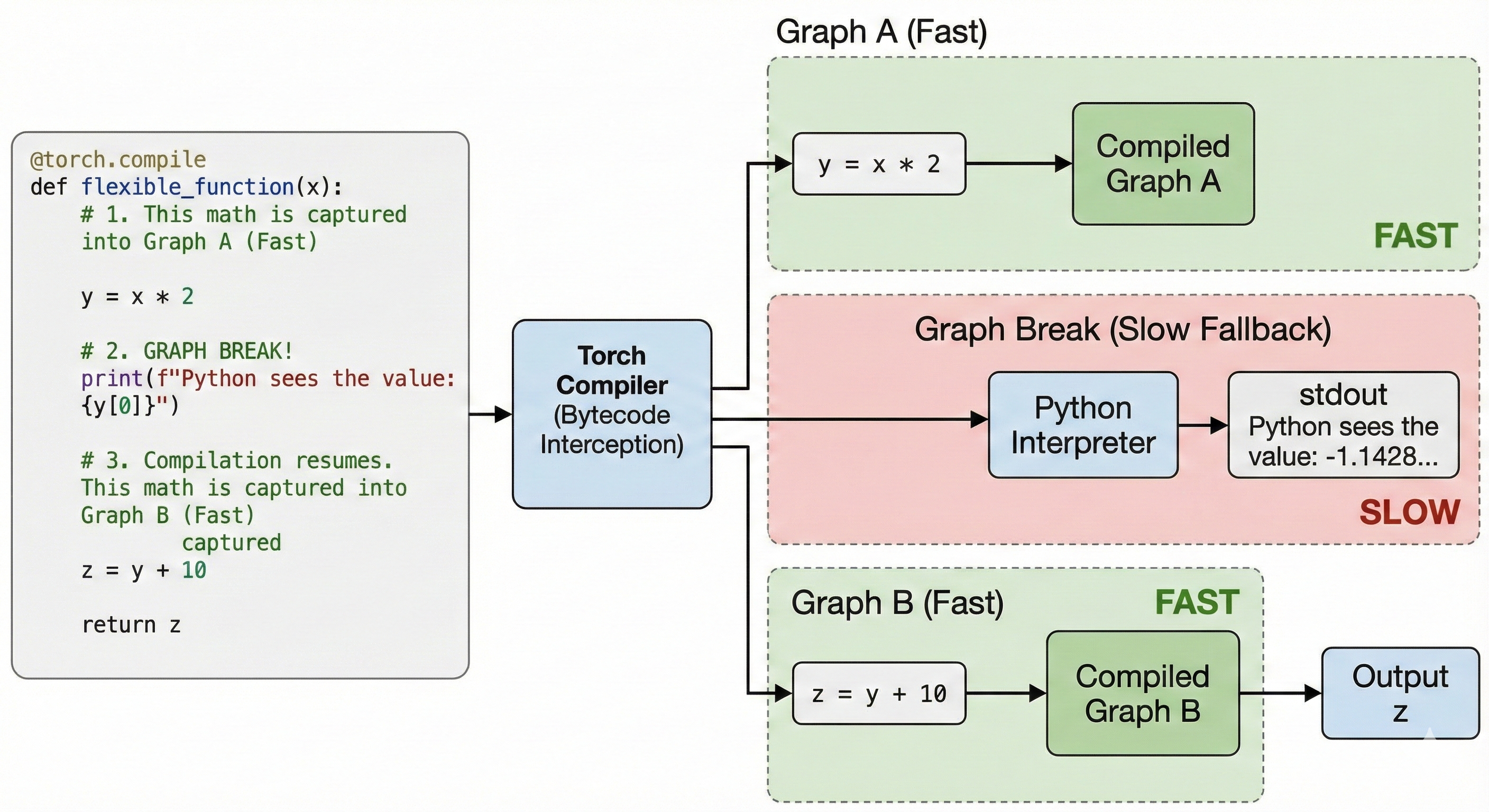

Bytecode Interception (PyTorch)

PyTorch's approach puts less weight on the developer. Any method that works in eager mode will also work with torch.compile. This is achieved by intercepting Python's bytecode and dynamically modifying it right before execution. This throws all of Jax's limitations out of the window.

Some Python operations cannot be compiled directly by torch.compile. For instance print or numpy calls. When torch.compile encounters these operations, it falls back to Python; we call this a Graph Break. Graph Breaks are slow and should be kept to a minimum to reach maximum performance.

Printing (PyTorch)

import torch

@torch.compile

def flexible_function(x):

# 1. This math is captured into Graph A (Fast)

y = x * 2

# 2. GRAPH BREAK!

# The compiler pauses. Python executes this print.

print(f"Python sees the value: {y[0]}")

# 3. Compilation resumes. This math is captured into Graph B (Fast)

z = y + 10

return z

x = torch.randn(5)

flexible_function(x)

stdout

Python sees the value: -1.1428250074386597

We print the runtime value at the cost of a graph break.

Runtime If Statement (PyTorch)

import torch

@torch.compile

def conditional(a, b):

if a.sum() > b.sum():

return a

return torch.exp(b)

a = torch.randn(5)

b = torch.randn(5)

conditional(a, b)

This code compiles without errors unlike Jax. However, it introduces a Graph Break. We can fix it by staying in graph with an API like torch.where.

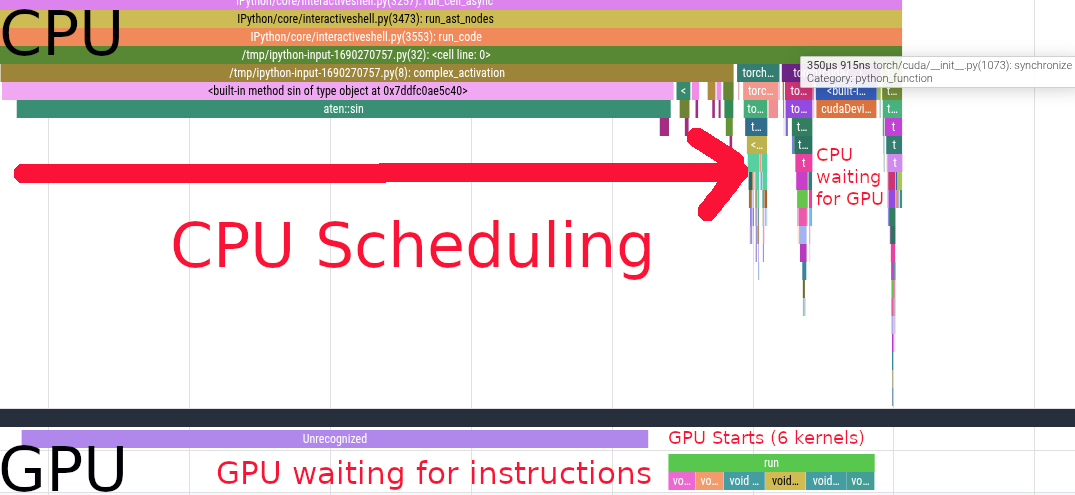

Eager Mode

We need to first understand Eager Mode to understand how compilation improves perfomance on top of it.

Eager Mode is the standard execution model of Jax and PyTorch. When you run ML code without torch.compile or jax.jit, you are executing code on the CPU in the Python runtime, this code sends instructions to the GPU or TPU that actually performs the computations.

When you chain multiple operations one after the other, the CPU doesn't wait for the GPU/TPU to complete its task, it takes the next operation and already schedule it concurrently to the GPU/TPU's execution. When all operations have been scheduled, the CPU waits for the GPU/TPU's work to be over.

Let's have a look at this PyTorch function running on GPU.

import torch

from torch.profiler import profile, record_function, ProfilerActivity

def complex_activation(x, y):

# Eager PyTorch launches 6 separate kernels for this:

# Read/Write memory 6 times!

a = torch.sin(x)

b = torch.cos(y)

c = a * b

d = c + x

e = d * y

return torch.relu(e)

The Bottleneck: In Eager Mode, every line of code above requires reading data from the GPU's memory (HBM), computing, and writing the result back. We are constantly moving data back and forth, which is often slower than the math itself.

We profile it using the torch profiler

N = 20 * 1024 * 1024

x = torch.randn(N, device='cuda')

y = torch.randn(N, device='cuda')

torch.cuda.synchronize()

# Warmup

complex_activation(x, y)

torch.cuda.synchronize()

with profile(

activities=[ProfilerActivity.CPU, ProfilerActivity.CUDA],

record_shapes=True,

with_stack=True

) as prof:

with record_function(f"run"):

complex_activation(x, y)

torch.cuda.synchronize()

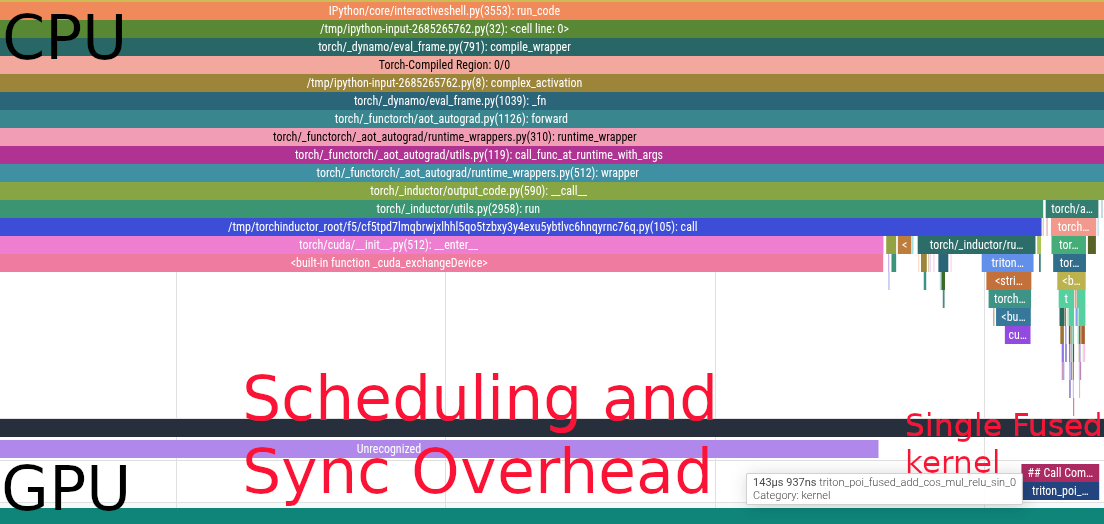

We see the CPU scheduling the first sin call. Scheduling the first kernel is very slow because of the synchronization overhead. After that, we see a bunch of other kernels being scheduled on the CPU side, those are cos, a * b, c + x, d * y and relu.

We see that the GPU starts working while the CPU is still scheduling instructions. After scheduling, the CPU starts waiting for the GPU to complete its work. The GPU executes 6 different kernels in \(731\mu s\).

Optimizations

When running code in Eager Mode, PyTorch and Jax see function calls one at a time. They cannot reason holistically about how these functions interact together. So while each individual function is written optimally, the transitions between functions are suboptimal.

On the contrary, jax.jit and torch.compile allow their compilers to see the whole code ahead of time. This gives them the opportunity to fuse, rewrite, and eliminate code optimally.

Kernel Fusions

Let's take another look at the code from the previous chapter.

def complex_activation(x, y):

# Eager PyTorch launches 6 separate kernels for this:

# Read/Write memory 6 times!

a = torch.sin(x)

b = torch.cos(y)

c = a * b

d = c + x

e = d * y

return torch.relu(e)

opt_activation = torch.compile(complex_activation)

In Eager Mode, we scheduled 6 different kernels one after the other. This means that the GPU had to load from HBM the whole array each time and then write it back in between kernels.

When we compile the code, PyTorch fuses all the kernels, allowing the GPU to load the arrays x and y from HBM once, and applying all the computations once from fast memory.

Here's the flame graph

We now have a single kernel instead of 6. Bringing the latency down from \(731\mu s\) to \(144\mu s\).

Buffer Reuse

When an array is read once and not needed after, ML compilers can reuse the memory space instead of constantly reallocating. This saves both memory and latency by increasing cache locality.

In the previous example, compilers need to allocate for x and y. Then

- We have to allocate

aandbbecausexandyare needed later a(orb) can be overwritten withcbecause they are not reusedxcan be overwritten withdycan be overwrittenewhich can be muttated in place before returning

Relayout

ML compilers are able to reason about the best layout for your operations. For instance, the MXU on the TPU operates on array of shape \((8, 128)\) at least. If you have an einsum with an input shape \((64, 4, 1024)\), the 4 being a non contracted batch dimension like the number of heads in attention, jax.jit will transpose your array to \((4, 64, 1024)\) under the hood, making full use of the MXU.

Dead Code Elimination

Sometimes, we write code that is not needed for anything. It is not returned, nor does it have any effects (like print). This code is completely safe to be removed from the program.

For instance, in this example the x @ gating1 call is absent from the flame graph

def mlp(x, gating0, gating1, linear):

y = x @ gating0

# x @ gating1 is never accessed, this computation is useless

# it is eliminated by the compiler

unused = x @ gating1

y = torch.nn.functional.relu(y)

out = y @ linear

return out

compiled_mlp = torch.compile(mlp)

device = torch.device("cuda")

generator = torch.Generator(device=device).manual_seed(0)

b = 512

d = 2048

f = 2 * d

x = torch.randn((b, d), generator=generator, device=device, dtype=torch.bfloat16)

gating0 = torch.randn((d, f), generator=generator, device=device, dtype=torch.bfloat16)

gating1 = torch.randn((d, f), generator=generator, device=device, dtype=torch.bfloat16)

linear = torch.randn((f, d), generator=generator, device=device, dtype=torch.bfloat16)

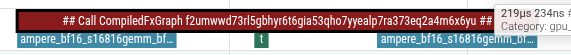

There are only 2 gemm kernel calls

Backward Pass

ML frameworks implement auto differentiation for us, so we usually do not need to implement the backward pass ourselves. Nonetheless, it is important to understand how the gradients are propagated to be able to reason about memory usage and the computational overhead of the backprop.

What are the Forward and the Backward Pass?

The Forward pass is the "main formula" of the model. It is the primary computation of the model, transforming inputs to outputs. It is executed during both inference (to get predictions) and training (to compute the loss).

The Backward Pass (Backpropagation) is the chain rule of calculus applied in reverse. It computes the gradient of the loss function with respect to every parameter in the model. These gradients indicate the direction and magnitude to adjust each parameter to minimize error.

The forward pass produces predictions. During training, these predictions are compared to a ground truth value to calculate a loss. The backward pass produces gradients, or directions for each parameter in the model. The gradients are consumed by the optimizer to update the weights used in both the forward and the backward pass.

How is it computed?

Most functions in ML are differentiable (or have defined subgradients for points like x=0 in ReLU). Therefore, when ML frameworks developers implement a function, they implement its forward and backward methods.

A basic example using jax.grad, the derivative of the Sine function sin is simply the Cosine cos. When we apply jax.grad to jnp.sin, jax will internally call jnp.sin.backward (not the exact internal name), which is jnp.cos.

import jax.numpy as jnp

grad_sin = jax.grad(jnp.sin)

grad_sin(0.2) == jnp.cos(0.2)

Let's take a look at a more complex example:

import jax

import jax.numpy as jnp

key0, key1, key2 = jax.random.split(jax.random.key(0), 3)

b, d, f = 16, 64, 32

x = jax.random.normal(key0, (b, d))

w_0 = jax.random.normal(key1, (d, f))

w_1 = jax.random.normal(key2, (f, d))

def mlp(args):

x, w_0, w_1 = args

z = x @ w_0

z_relu = jax.nn.relu(z)

out = z_relu @ w_1

return 0.5 * jnp.sum(out ** 2)

grad_mlp = jax.grad(mlp)

# jax.grad only returns the gradients of the first argument

# so we pass all our arguments as a tuple

grad_mlp((x, w_0, w_1))

This is a classic 2 layers MLP with a ReLU activation in between. \[\text{ReLU}(x W_0) W_1\]

- The

Forward Passsimply executes the code we wrote. - The

Backward Passtakes the output of theForward Passand executes the backward methods in reverse order by propagating gradients backward. - Some derivatives require the original activation from theForward passso we need to individually store them during theforward call.

Walking through the backward pass

0.5 * jnp.sum(out ** 2)The derivative is simplyoutout = z_relu @ w_1Here we need to compute the gradients ofw_1which will be used to updatew_1by the optimizer and the gradients ofz_reluthat will be backpropagated.dL/dW1 = z_relu.T @ grads((b, f).T @ (b, d) -> (f, d))dL/dZ_relu = grads @ w1.T((b, d) @ (f, d).T -> (b, d))

z_relu = jax.nn.relu(z)ReLUis defined asrelu(x) = max(0, x). Its derivative is therefored_relu(x) = 0 if x <= 0 else 1. We then multiply the derivative with the gradients.- Performance Note: Storing values in

HBM(High Bandwidth Memory) is expensive. For element-wise operations like ReLU, it is often faster torecomputethe activation during the backward pass using the cached input (z) rather than storing the output (z_relu) and reading it back. This is known asactivation recomputationorrematerialization.

- Performance Note: Storing values in

z = x @ w_0. Just like for layer 1:dL/dW0 = x.T @ grads((b, d).T @ (b, f) -> (d, f))dL/dx = grads @ w0.T((b, f) @ (d, f).T -> (b, d))

First let's rewrite the MLP implementation to cache the intermediate activations:

def mlp_activations(x, w_0, w_1):

activations = [x]

z = x @ w_0

activations.append(z)

z_relu = jax.nn.relu(z)

activations.append(z_relu)

out = z_relu @ w_1

activations.append(out)

return 0.5 * jnp.sum(out ** 2), activations

Now let's implement the backward pass:

def manual_mlp_grad(x, w_0, w_1):

# Forward

_, activations = mlp_activations(x, w_0, w_1)

# Pop out, shape (b, d)

out = activations.pop()

# 1. Derivative dL/dOut = out

grads = out

# 2. Derivative of Layer 1

z_relu = activations.pop()

# dL/dW1

grads_w_1 = z_relu.T @ grads

# dL/dZ_relu

grads_z_relu = grads @ w_1.T

# 3. Derivative of ReLU

z = activations.pop()

grads_z = jnp.where(z > 0, 1, 0) * grads_z_relu

# 4. Derivative of Layer 0

x = activations.pop()

# dL/dW0

grads_w_0 = x.T @ grads_z

# dL/dx

grads_x = grads_z @ w_0.T

return grads_x, grads_w_0, grads_w_1

Correctness check:

import numpy as np

manual_out = manual_mlp_grad(x, w_0, w_1)

jax_out = grad_mlp((x, w_0, w_1))

for manual, autograd in zip(manual_out, jax_out):

np.testing.assert_allclose(manual, autograd)

Performance Implications

Flops

As we have seen, each matrix multiplication in the forward pass requires two matrix multiplications in the backward pass. Hence, the number of flops in the backward pass can easily be approximated as twice the flops of the forward pass.

Memory Usage

Since we need to store the intermediate activations during the forward pass, our model requires a lot more memory during training than during inference. A common rule of thumb is that Training memory is ~3x-4x Inference memory for the same batch size, primarily due to the need to store these intermediate activations. Furthermore, constantly writing and reading previous activations saturates the memory bandwidth which slows down prefetching of other parameters.

It is crucial to study which activations are being cached, and actively find opportunities for recomputations when appropriate. Either to free up memory or to speed up the step time.

On-Chip Parallelism

Machine Learning workloads require more and more computational power as we scale the number of parameters, the context lengths, and the amount of data we ingest. At the same time, chip design has hit a plateau; it is getting prohibitively expensive to increase the number of operations a chip can do per second. Furthermore, memory latency and bandwidth have not been keeping up with the increases in compute speed, implying that computational power cannot be fully leveraged because the data cannot be moved as fast as it is being processed. We cannot rely on faster chips, so we instead rely on the chips doing more at the same time either by doing multiple operations at once or having multiple cores working together in parallel.

Sequential execution model

Traditional chips were thought of as having two main blocks; the memory (RAM) and the Central Processing Unit (CPU.)

Traditional software is usually written with this implied model:

- Load some scalars from RAM to the CPU

- Do some operations on the CPU

- Write back the output of those operations to RAM

- Repeat for the next instruction

While this model is great and allowed us to write most of the software running the world today; it has long become incoherent with the way chips actually process data. We let the compilers and the chips themselves rewrite our code to make better use of the actual capabilities of the hardware; mostly through different levels of parallelism and better memory access patterns.

The different levels of on-chip parallelism

Modern chips are all inherently parallel. Whether they are GPUs, TPUs, or modern CPUs. They also all feature different types of parallelism that need to be exploited to maximize the chip's utilisation. Exploiting these mechanisms is not always explicit because compilers are reasonably good at leveraging target architectures's features. Some chips are also capable of rewriting the machine code they receive before executing it.

IO parallelism

The processing unit and the memory are two independent units. Therefore, the processor is able to perform computations independently of the memory reads and writes. For instance, it can request some data from RAM as well as perform an addition between two numbers it has already loaded while waiting for the data to be received. This means we can potentially completely overlap computation times with memory movements. In our execution model, steps 1, 2, and 3 can all be executed in parallel.

Single Instruction Multiple Data (SIMD)

Most modern chips are capable of executing a single instruction on multiple elements at once. This can mean adding two vectors with one another in one cycle, reducing (ie. summing) a vector into a scalar, or even running a matrix dot product within specialized Arithmetic Logic Units (ALUs) in TPUs' and GPUs' tensor cores.

- Modern x86 chips feature AVX registers

- TPUs have MXUs for matrix dot products, VPUs for elementwise vectorized operations, and XLUs for reductions

- GPUs have tensor cores for matrix dot products

Coming back to our execution model. Instead of executing one operation at a time on scalars, we instead perform as many operations as we can in parallel within a SIMD unit and also load more data at once since our registers are larger.

Instruction Level Parallelism

As we have mentioned, modern chips possess multiple circuits that specialize in the handling of different data types and operations. For instance, TPUs have MXUs and VPUs. Some of these circuits can also be used independently. For instance, we could compute a dot product on the MXU, and apply a ReLU activation at the same time on the VPU (more specifically, perform a dot product, write the output to the VPU, do the next dot product at the same time as we apply ReLU on the VPU.)

Multiple threads of execution

Finally, modern architectures usually feature multiple processing units that can execute operations independently of one another. This is the main differentiator of GPUs which possess thousands of cores that can all execute operations in parallel on different data addresses. This model comes with additional complexities such as the need to synchronize data across cores safely and efficiently.

Coming back to the original model, we now execute the model multiple times in parallel.

Comparison of On-Chip Parallelism

| Parallelism Type | Core Concept | ⚙️ Hardware Example | 💻 Software Abstraction | 👤 Who Implements This? |

|---|---|---|---|---|

| IO parallelism | Hiding memory latency by performing computation while waiting for data to be fetched. | GPU warp schedulers swapping threads stalled on memory reads; hardware prefetchers. | Optimized kernels (e.g., in cuDNN, XLA). | Chip Hardware (schedulers) & Compiler (instruction scheduling). |

| SIMD (Single Instruction, Multiple Data) | One instruction operating on many data elements (a vector) at once. | GPU Tensor Cores (for matrices), TPU MXUs, CPU AVX registers. | Vectorized code (e.g., a + b on tensors), torch.matmul. | Compiler / Library (e.g., cuDNN, XLA). The programmer enables this by using high-level vector/matrix ops. |

| Instruction-Level Parallelism (Using Multiple ALUs) | Using different, specialized execution units (ALUs) within a core at the same time. | A TPU pipelining work from its MXU (matrix) to its VPU (vector). | Kernel Fusion (e.g., matmul + relu in one operation). | Compiler (e.g., jax.jit, XLA). The chip hardware makes it possible. |

| Multithreading / Multicore (MIMD / SIMT) | Multiple processing units (cores) executing instructions independently. | Multi-core CPU (MIMD), thousands of CUDA Cores on a GPU (SIMT). | Data Parallelism (splitting a batch over cores) or Model Parallelism. | Programmer & Library (e.g., CUDA, which manages threads for kernels). |

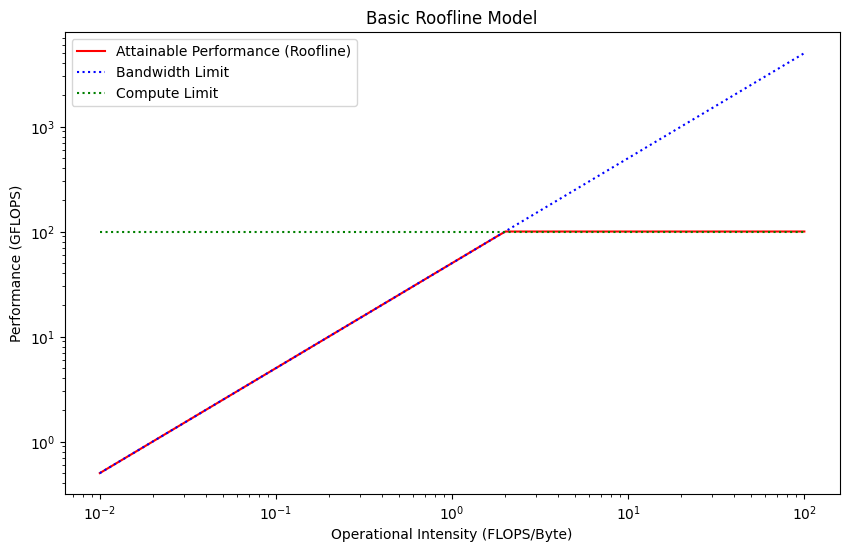

Estimating Performance

We want to answer the following question: "Given my code and my chip, what is the fastest theoretical time this function should take?"

We can simply model it, by assuming we can overlap all the components involved in the operation (Memory, Tensor Cores, etc), the theoretical fastest time is going to be the time of the slowest component.

How to Estimate our Performance?

We need to figure out which part of the chip will be taking the largest amount of time.

Therefore, we need:

- How much time will it take the ALUs to execute all computations?

- How long will we spend loading the data from the main memory to the ALUs?

- What is the maximum of these two values?

Let's start with 1. Typically, we will estimate a simple dot product. The flops of a dot product are computed as such:

for an mk,kn->mn dot product

flops = m * k * n * 2

Now we need to divide the number of flops by the theoretical limit of the machine to get the peak theoretical compute performance.

compute_seconds = flops / flops_per_second(chip)

Now, let's compute the time it will take to load the memory onto the ALUs and to write the output back.

let's call the left hand side "lhs" and the right hand side "rhs".

total_memory_bytes = (m * k * bytes_per_element_lhs) + (k * n * bytes_per_element_rhs) + (m * n * bytes_out)

The time it should take to load the memory will be

memory_time_seconds = total_memory_bytes / memory_bandwidth_seconds(chip)

Our theoretical run time will be:

max(compute_seconds, memory_time_seconds)

Practical Example